After reading this, you should have a pretty good understanding of what you can do with WebXR today, from VR audio experiments and casual VR gaming on an arcade machine to more serious uses such as new ways of collaborating in AR or VR.

Indeed, in this article, I’ll share a lot of fun experiments I’ve been working on to build either immersive or augmented reality WebXR experiences using Babylon.js, as well as more serious business scenarios. You’ll be able to learn by experimenting & reading the source code of each demo. If you don’t have a compatible device yet, I’ll share some fallbacks as well as videos using the Valve Index, Oculus Quest 2 or HoloLens 2.

If you prefer to discover what’s possible with WebXR today by watching a video, you can watch this 20-minute talk I gave: Building Fun Experiments with WebXR & Babylon.js by David Rousset – GitNation. It covers what’s explained below.

Babylon.js is a free & open-source 3D engine built on top of WebGL and WebGPU. It supports Web Audio & WebXR out of the box. You just have to focus on the experience or game you’d like to build; we’ll manage the complexity of many Web APIs for you.

It’s being used at Microsoft in many of our products such as Microsoft Teams, PowerPoint, Bing, SharePoint, our Xbox Streaming service (xCloud) and others. It’s also used by some of our big partners like Adobe for instance.

WebXR?

WebXR is a Web API enabling both Virtual Reality (VR) and Augmented Reality (AR) scenarios. I’m expecting it to be more and more popular as it will be one of the major building blocks of the metaverses on the web. You should have a look!

To know more about this specification:

- WebXR Device API (immersive-web.github.io)

- The Immersive Web Working Group/Community Group | immersive-web.github.io

- Using WebXR with Windows Mixed Reality – Mixed Reality | Microsoft Docs

- WebXR | ARCore | Google Developers

- Fundamentals of WebXR – Web APIs | MDN (mozilla.org)

To be able to use it, you need a compatible headset such as the Valve Index, Oculus Quest 2, Windows Mixed Reality headsets, HoloLens 2 or any SteamVR compatible headset for VR and an Android smartphone or HoloLens 2 for AR. For the browser, you need a Chromium-based one such as Google Chrome, Microsoft Edge, Opera, Samsung Internet, Chrome for Android or the Oculus Browser.

For Babylon.js, our documentation entry point is here: WebXR | Babylon.js Documentation (babylonjs.com)

The XR Experience Helper

Babylon.js offers a complete VR experience via a single line of code. It makes an existing scene VR compatible, offers teleportation (you must provide the meshes acting as the floor) and displays the right models for the controllers currently being used.

For instance, to immerse yourself in a famous sequence of Back To The Future, navigate to this URL: https://playground.babylonjs.com/#TJIGQ1#3 and have a look at the code. You’ll notice the magic happens thanks to this line of code:

var xr = await scene.createDefaultXRExperienceAsync({floorMeshes: [scene.getMeshByName("Road1"), scene.getMeshByName("Herbe1")]});

Here, two meshes can serve as teleportation targets: ‘Road1’ and ‘Herbe1’.

If you have a compatible browser and a WebXR compatible device connected, you’ll see a VR icon displayed on the bottom right:

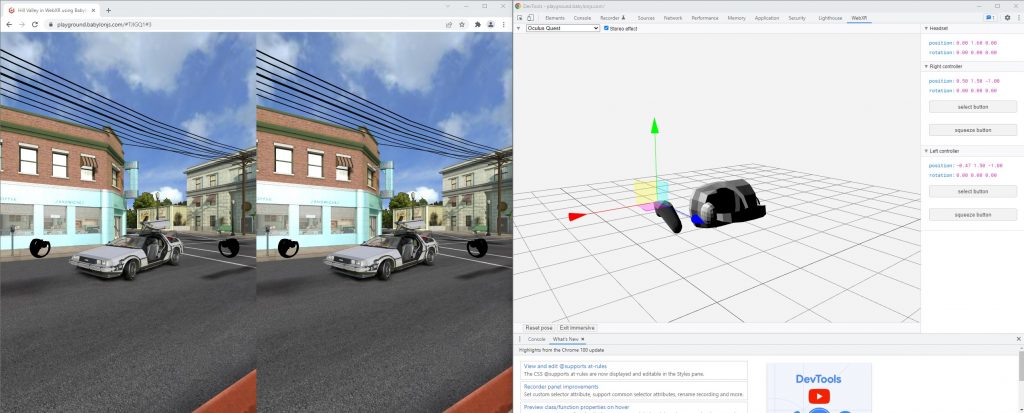
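A page can also check for WebXR support itself before showing any VR entry point. The snippet below is only a minimal sketch based on the WebXR Device API, not what the Playground does internally: `detectXRSupport` is a hypothetical helper name, and it receives `navigator.xr` as a parameter so it can also be exercised outside a browser.

```javascript
// Hypothetical helper: reports which immersive session modes are available.
// `xrSystem` is expected to be `navigator.xr` (undefined in browsers without WebXR).
async function detectXRSupport(xrSystem) {
    if (!xrSystem || typeof xrSystem.isSessionSupported !== "function") {
        return { vr: false, ar: false };
    }
    // isSessionSupported() is part of the WebXR Device API and resolves to a boolean.
    // A rejected promise is treated as "not supported".
    const [vr, ar] = await Promise.all([
        xrSystem.isSessionSupported("immersive-vr").catch(() => false),
        xrSystem.isSessionSupported("immersive-ar").catch(() => false),
    ]);
    return { vr, ar };
}

// Usage in a browser:
// detectXRSupport(navigator.xr).then((support) => console.log(support));
```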

If you don’t have a compatible device, you can install this Chrome extension, which emulates a WebXR device: WebXR API Emulator – Chrome Web Store (google.com). Open the developer tools and you’ll be able to simulate someone using a VR headset:

Here’s a video showing the full experience using a Valve Index in Microsoft Edge on Windows 11:

You can see that you can move using the teleportation target, like in any classic VR game, and you can even choose which way to face when landing.

Please note that the laser enabled on the controller will generate a pointer down event when the trigger button is pressed. This means you can design your 3D scene to work with mouse or touch during development and it will automatically be compatible with our VR Experience Helper.
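To illustrate, here is a minimal sketch of a pointer handler that should behave the same with a mouse, a touch screen or a VR controller laser. `onMeshPointerDown` is a hypothetical helper name; it relies on `scene.onPointerObservable` and `BABYLON.PointerEventTypes`, which are passed in as arguments so the function has no hidden globals.

```javascript
// Hypothetical helper: calls `onPick(mesh)` whenever a pointer down hits a mesh.
// `scene` is a Babylon.js Scene and `pointerEventTypes` is BABYLON.PointerEventTypes.
function onMeshPointerDown(scene, pointerEventTypes, onPick) {
    return scene.onPointerObservable.add((pointerInfo) => {
        const pick = pointerInfo.pickInfo;
        if (pick && pick.hit && pick.pickedMesh) {
            onPick(pick.pickedMesh);
        }
    }, pointerEventTypes.POINTERDOWN); // the mask restricts the observer to pointer down events
}

// Usage in the Playground:
// onMeshPointerDown(scene, BABYLON.PointerEventTypes, (mesh) => console.log("Picked", mesh.name));
```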

By the way, if you love this scene like I do, have a look at the same version made compatible with WebGPU. If you run it inside a WebGPU compatible browser, you can get up to a 10x performance gain!

The VR Arcade machine

I love building small video games, I love arcade machines and I love VR. So, naturally, I mixed all of that into a single demo.

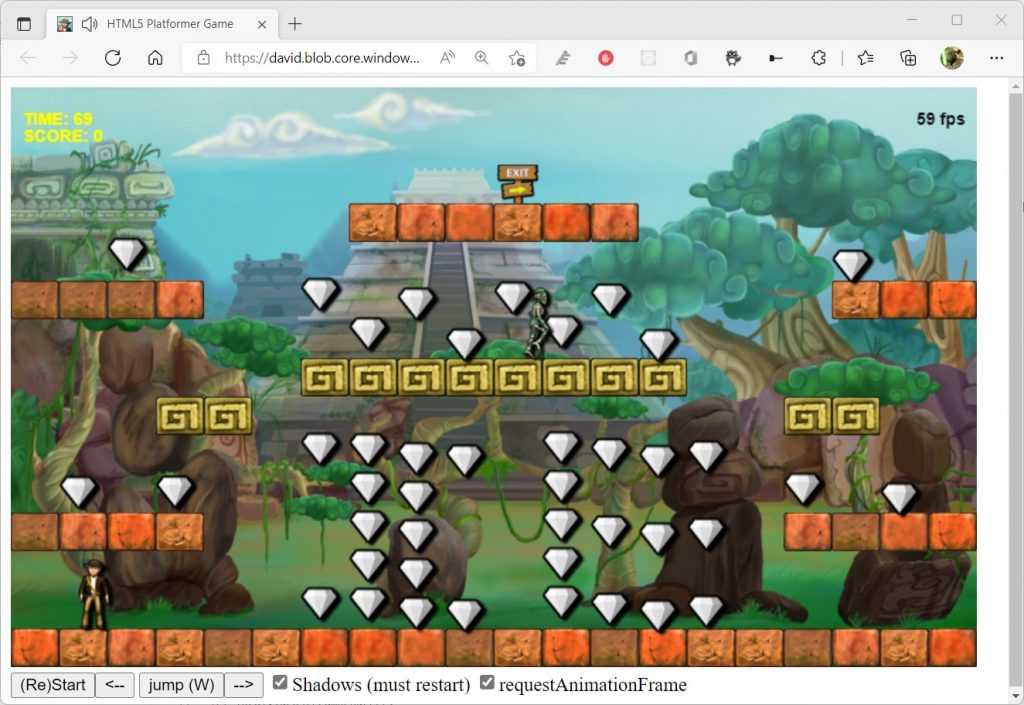

First, you can have a look at the original 2D Canvas game I ported years ago:

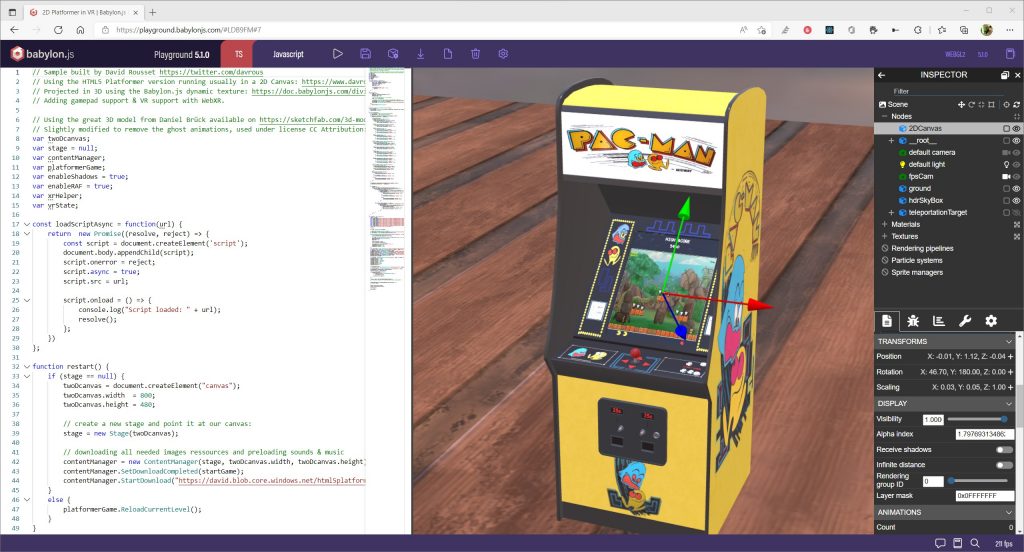

I then simply took this 2D canvas and rendered it on a 2D plane in the 3D canvas in Babylon.js. Indeed, you must render everything in the WebGL canvas to be able to view & interact with elements once in VR. Regular HTML elements are not projected into the 3D canvas in VR.

Babylon.js supports the 2D canvas via the Dynamic Texture: Dynamic Textures | Babylon.js Documentation (babylonjs.com).
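As a rough idea of how a 2D game canvas can end up on a plane, here is a minimal sketch. It is not the code of the demo: `copyCanvasToTexture` is a hypothetical helper, and the texture size, mesh names and `gameCanvas` variable in the usage comments are placeholders.

```javascript
// Hypothetical helper: copies the current frame of a 2D game canvas into a
// Babylon.js DynamicTexture so it can be displayed on a mesh.
function copyCanvasToTexture(sourceCanvas, dynamicTexture) {
    const { width, height } = dynamicTexture.getSize();
    const ctx = dynamicTexture.getContext(); // 2D context of the texture's internal canvas
    ctx.drawImage(sourceCanvas, 0, 0, width, height);
    dynamicTexture.update(); // uploads the canvas content to the GPU
}

// Usage in the Playground (names and sizes are placeholders):
// const screenTexture = new BABYLON.DynamicTexture("screen", { width: 512, height: 512 }, scene, false);
// const screenMaterial = new BABYLON.StandardMaterial("screenMat", scene);
// screenMaterial.emissiveTexture = screenTexture; // self-lit, like a real screen
// screenMaterial.disableLighting = true;
// const screenPlane = BABYLON.MeshBuilder.CreatePlane("screenPlane", { width: 1, height: 1 }, scene);
// screenPlane.material = screenMaterial;
// scene.onBeforeRenderObservable.add(() => copyCanvasToTexture(gameCanvas, screenTexture));
```

Copying the canvas on every frame is the simplest option; a game that only redraws occasionally could call the helper only when its canvas changes.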

I then just had to position the plane on top of the arcade machine model. I downloaded the model from Sketchfab.

If you need help positioning objects in the scene, I strongly encourage you to use our inspector tool:

You can find the source code and the demo ready to be played here: https://playground.babylonjs.com/#LDB9FM#7. You can play the platformer game using the keyboard, a gamepad controller or, of course, your VR controllers once you’ve entered immersive mode.

Here are 2 videos showing the experience, the first one using the Valve Index, the second one using the Oculus Quest 2.

Valve Index

Meta Quest 2

Virtual 360 VR Piano

I love composing music and I still love VR. So once again, I had to combine both! By the way, you can listen to my compositions on my SoundCloud: Stream David Rousset music | Listen to songs, albums, playlists for free on SoundCloud.

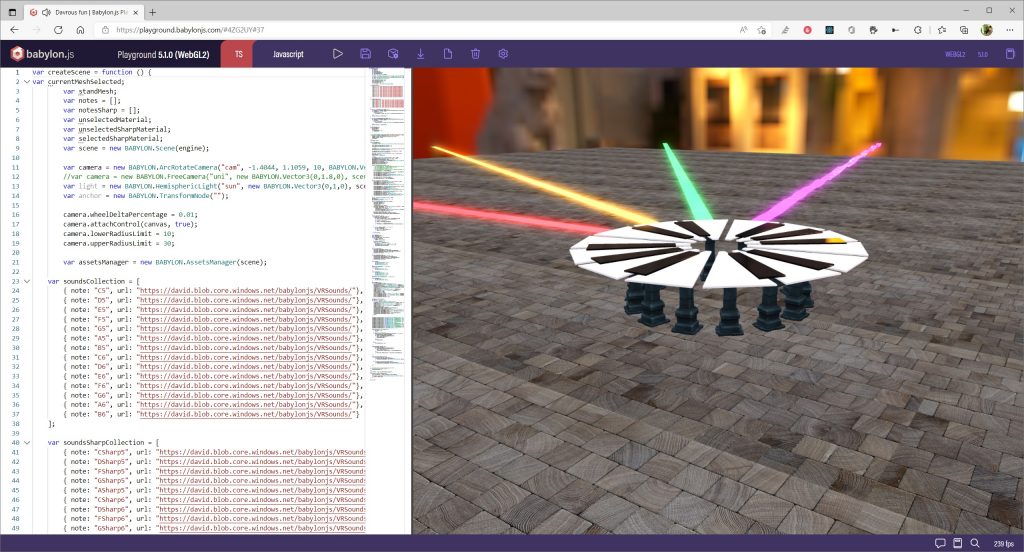

I then decided to create a 360 piano and associate a note with each key using our Web Audio support. I’ve also used the Web Audio analyser feature to display the waveform of each note on a ribbon.
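To give an idea of how analyser data can drive a ribbon, here is a minimal sketch that converts the bytes returned by the Web Audio `AnalyserNode.getByteTimeDomainData()` into a list of 3D points. `waveformToPoints` and its parameters are hypothetical and not taken from the demo; the points are plain objects that would still need to be converted to `BABYLON.Vector3` before building a mesh.

```javascript
// Hypothetical helper: maps AnalyserNode time-domain bytes to 3D points.
// Bytes range from 0 to 255, with 128 meaning silence.
function waveformToPoints(samples, length, amplitude) {
    const points = [];
    const step = samples.length > 1 ? length / (samples.length - 1) : 0;
    for (let i = 0; i < samples.length; i++) {
        points.push({
            x: i * step - length / 2,                  // spread samples along the ribbon, centered on 0
            y: ((samples[i] - 128) / 128) * amplitude, // normalize around 0, then scale
            z: 0,
        });
    }
    return points;
}

// Usage in a browser (Web Audio API):
// const analyser = audioContext.createAnalyser();
// const samples = new Uint8Array(analyser.fftSize);
// analyser.getByteTimeDomainData(samples);
// const points = waveformToPoints(samples, 4, 0.5);
```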

You can try it even with a flat 2D screen here: https://playground.babylonjs.com/#4ZG2UY#37

To make it work in VR, you simply need to uncomment line 12 and comment line 11. The camera will then be at the center of the 360 piano.

Meta Quest 2

Here’s a video (of a slightly older version of my code) inside the Meta Quest 2:

HoloLens 2

The same demo also works on a HoloLens 2. I’ve slightly modified the code to make it work better on HoloLens by removing the skydome and replacing it with a black texture. On the HoloLens 2, pure black is rendered as transparent, so you can see through it. You can try it on your HoloLens 2 if you’ve got one: https://playground.babylonjs.com/#4ZG2UY#42

Here’s a video of me trying it in my HoloLens 2:

You can see that we have hand tracking support available in Babylon.js. I’m simply tapping my fingers to generate a pointer down on the keys to trigger the notes. Once again, you simply have to make your demo work with mouse & touch, and it will work in VR.
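For reference, hand tracking can be requested explicitly through the WebXR features manager. The snippet below is a minimal sketch, assuming `xr` is the object returned by `scene.createDefaultXRExperienceAsync()`; `enableHandTracking` is a hypothetical helper name. Recent Babylon.js versions may already turn this feature on in the default experience, and the device and browser still need to support hand input.

```javascript
// Hypothetical helper: turns on hand tracking for an existing default XR experience.
// `xr` is the object returned by scene.createDefaultXRExperienceAsync() and
// `featureName` is BABYLON.WebXRFeatureName.HAND_TRACKING.
function enableHandTracking(xr, featureName) {
    const featuresManager = xr.baseExperience.featuresManager;
    return featuresManager.enableFeature(featureName, "latest", {
        xrInput: xr.input, // the feature needs the input sources to attach hands to
    });
}

// Usage in the Playground:
// const xr = await scene.createDefaultXRExperienceAsync();
// enableHandTracking(xr, BABYLON.WebXRFeatureName.HAND_TRACKING);
```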

AR Magical Orb Demo

I didn’t build this demo myself, but it’s really super cool. This sample code uses the WebXR AR feature to analyze your environment and let you place an orb on a flat surface.

You can read the code & try the demo on an AR compatible device (HoloLens 2 or an Android smartphone) here: https://aka.ms/OrbDemoAR
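Placing an object on a detected surface typically relies on the WebXR hit test feature. The following is a minimal sketch of that idea, not the code of the orb demo: `followHitTest` and the `marker` mesh are hypothetical, and the usage comments assume an AR session created through the default XR experience helper.

```javascript
// Hypothetical helper: keeps `marker` (a mesh) glued to the first detected surface point.
// `hitTestFeatureName` is BABYLON.WebXRFeatureName.HIT_TEST.
function followHitTest(featuresManager, hitTestFeatureName, marker) {
    const hitTest = featuresManager.enableFeature(hitTestFeatureName, "latest");
    hitTest.onHitTestResultObservable.add((results) => {
        if (results.length === 0) {
            marker.isVisible = false; // nothing detected in front of the device
            return;
        }
        marker.isVisible = true;
        // Each result carries a transformation matrix for the detected surface point.
        results[0].transformationMatrix.decompose(
            marker.scaling,
            marker.rotationQuaternion,
            marker.position
        );
    });
    return hitTest;
}

// Usage in the Playground (AR session, marker shape and name are placeholders):
// const xr = await scene.createDefaultXRExperienceAsync({
//     uiOptions: { sessionMode: "immersive-ar" },
//     optionalFeatures: true,
// });
// const marker = BABYLON.MeshBuilder.CreateTorus("marker", { diameter: 0.15, thickness: 0.05 }, scene);
// marker.rotationQuaternion = new BABYLON.Quaternion();
// followHitTest(xr.baseExperience.featuresManager, BABYLON.WebXRFeatureName.HIT_TEST, marker);
```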

Here’s a video of myself testing it with my Samsung S8:

More serious scenarios

Of course, WebXR is not just made for fun & gaming experiences. To be honest, it’s probably even more used today for “serious” scenarios (even if for me, gaming is a serious business).

eCommerce

First, WebXR, and its AR feature, is interesting for eCommerce scenarios. I would encourage you to read this great article on the Babylon.js blog: WebXR, AR and e-commerce: a Guide for Beginners | by Babylon.js | Medium. It contains a demo you can try on your Android smartphone (or HoloLens 2): https://aka.ms/AREcommerce

Watch the result:

Metaverse / Virtual Visit

I’ve also recently been working on a “Metaverse” demo where I was able to call someone on Microsoft Teams from a VR scene using Azure Communication Services, aka ACS (a CPaaS running on top of WebRTC). The idea was to experiment with a concept where you could, for instance, visit a house with the help of a sales representative connecting from Microsoft Teams.

Here’s a video of the demo running on my Valve Index:

I first built a prototype in the Babylon.js Playground: https://playground.babylonjs.com/#JA1ND3#539 where you can navigate in the scene, press the “Call” button and experience a fake video. You can press the “A” button of an Oculus controller to put the video on top of the left controller.
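Reacting to a controller button such as “A” can be done through the motion controller components exposed by Babylon.js. The snippet below is a minimal sketch rather than the prototype’s actual code: `onControllerButtonPressed` is a hypothetical helper, `xrInput` is assumed to be `xr.input` from the default XR experience, and `"a-button"` is the component id used for the A button of Oculus Touch controllers.

```javascript
// Hypothetical helper: calls `onPress(controller)` when the given button gets pressed.
function onControllerButtonPressed(xrInput, componentId, onPress) {
    xrInput.onControllerAddedObservable.add((controller) => {
        controller.onMotionControllerInitObservable.add((motionController) => {
            const button = motionController.getComponent(componentId);
            if (!button) {
                return; // this controller has no such button
            }
            button.onButtonStateChangedObservable.add((component) => {
                // Only react to the transition into the pressed state.
                if (component.changes.pressed && component.pressed) {
                    onPress(controller);
                }
            });
        });
    });
}

// Usage in the Playground:
// const xr = await scene.createDefaultXRExperienceAsync();
// onControllerButtonPressed(xr.input, "a-button", (controller) => {
//     console.log("A pressed on", controller.inputSource.handedness);
// });
```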

Then I integrated the ACS JavaScript SDK to get the video streamed by the ACS infrastructure from Microsoft Teams. You can try the sample & read the code in my GitHub repo: GitHub – davrous/acsauth: Deploy in less than 10 min an Azure Communication Services sample to be shared & tested with your colleagues & friends. You’ll find the complete setup instructions in the readme.

Metaverse integrated inside Microsoft Teams

I’ve also been working with the awesome people from FrameVR who are using Babylon.js to build their custom Metaverse solution. They recently published their app in the Microsoft Teams app store and wrote about it: Frame Blog: Bringing the Metaverse to Microsoft Teams (framevr.io).

Have a look at the result:

Other Microsoft products

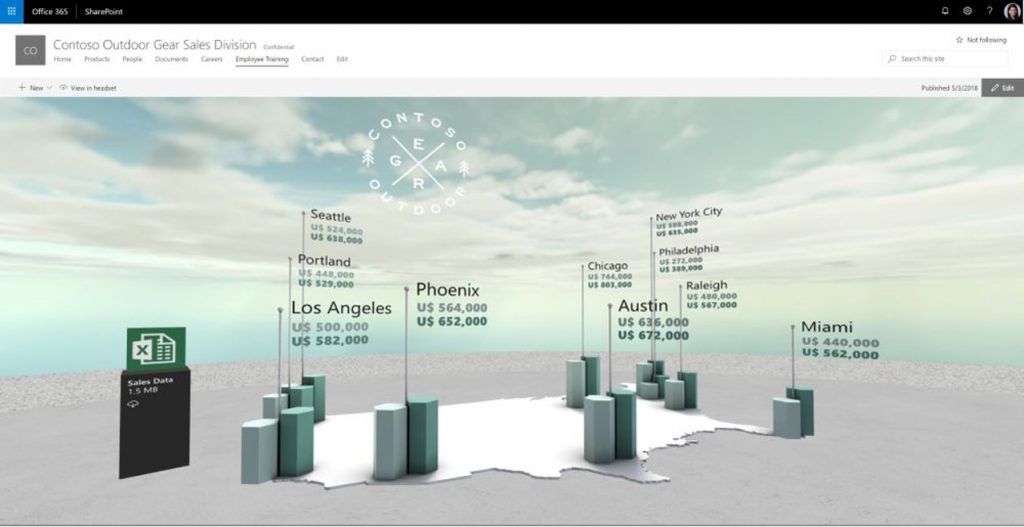

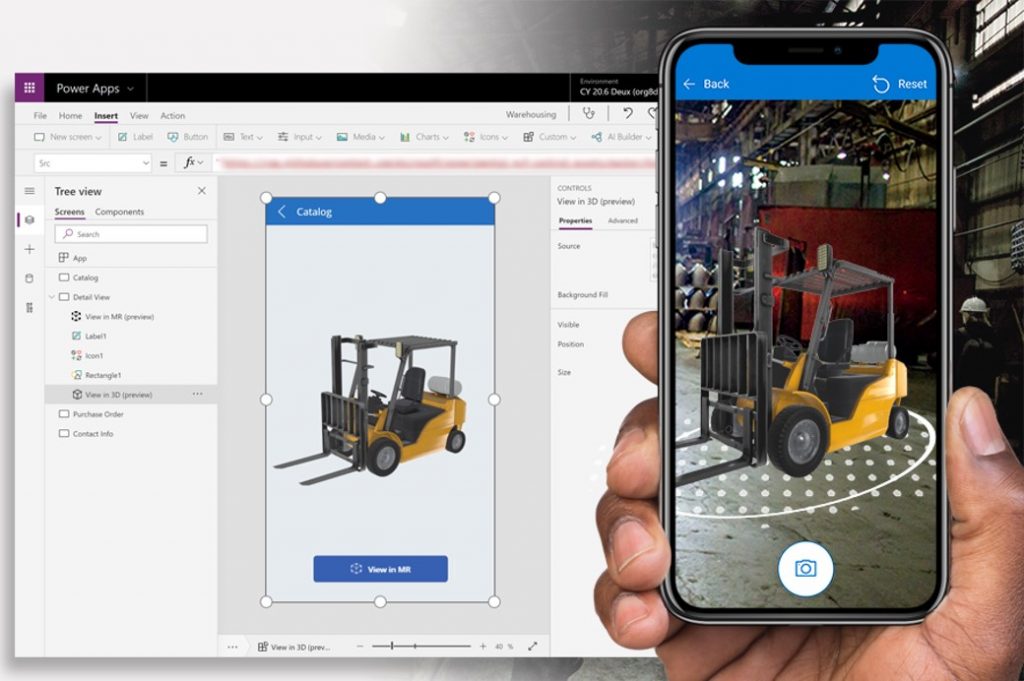

Babylon.js and its WebXR support are also used inside SharePoint Spaces and Power Apps: Use the 3D object control in Power Apps – Power Apps | Microsoft Docs.

As you can see, WebXR is ready for prime time!

I hope this article and the various demos with their associated code will help you to better understand what can and could be done using WebXR. I hope it will inspire you to build great immersive or augmented reality experiences!

If you’ve got questions, please ping me on Twitter: https://twitter.com/davrous or ask the Babylon.js community directly: https://forum.babylonjs.com/